This case study describes a ransomware intrusion affecting an organization operating a hybrid IT/OT environment, where business systems were tightly coupled with operational processes.

The attacker, later attributed to Lynx ransomware operations, executed a fast-paced intrusion leveraging valid credentials, automated lateral movement, and large-scale credential abuse. Despite widespread compromise across the IT environment, critical operational processes remained partially functional—revealing important insights about both attacker tradecraft and system design.

During the investigation, multiple data sources were utilized to gain visibility into the environment and attacker activity. These included comprehensive endpoint artifacts such as Windows event logs, registry hives, scheduled tasks, prefetch files, and system metadata collected from over 50 hosts spanning both IT and OT networks. While the organization had begun implementing specialized OT monitoring solutions, including Claroty CTD, its deployment was still in progress and not fully operational at the time of the incident. Therefore, the primary analysis relied on traditional endpoint and network telemetry, which proved essential for reconstructing the attack timeline and understanding the lateral movement and persistence techniques employed by the adversary.

Although Claroty CTD was not fully deployed, its logs were leveraged as an initial guide to help focus the investigation. These early indicators provided valuable context on suspicious activity within the OT network, enabling the team to prioritize data collection and analysis efforts effectively.

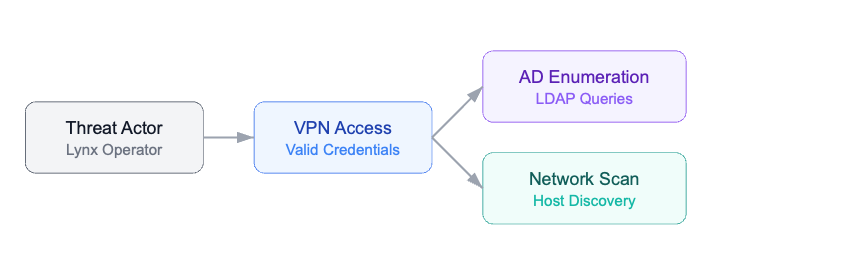

Phase 1 – Initial Access & Reconnaissance

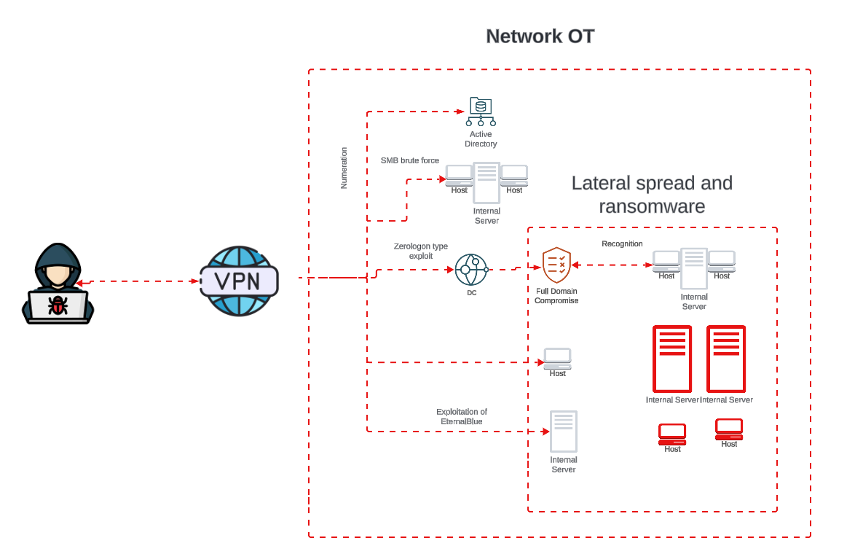

The intrusion originated through a remote access service (VPN) using valid credentials, indicating prior credential compromise (e.g., phishing, credential reuse, or infostealer activity).

Immediately after access, the threat actor initiated structured reconnaissance:

- Active Directory enumeration via LDAP queries to identify domain structure, users, groups, and privileged accounts.

- Network discovery using TCP port scanning and ICMP sweeps.

- Early focus on Domain Controllers, database servers, and critical infrastructure nodes.

This behavior reflects a goal-oriented intrusion, where the attacker rapidly prioritizes assets that enable privilege escalation and domain-wide visibility.

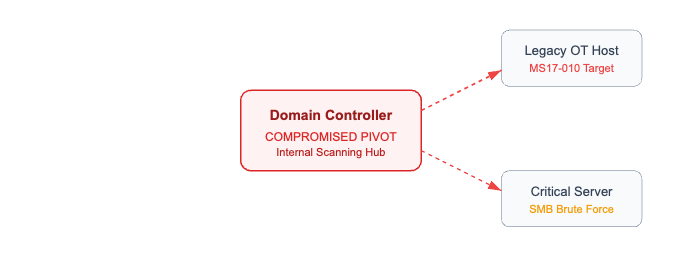

Phase 2 – Expansion & Exploitation

Following reconnaissance, the attacker transitioned into exploitation and access expansion. Observed behaviors included high-volume SMB authentication attempts characterized by password spraying and brute force patterns, the use of automated tooling to identify weak or reused credentials, and exploitation attempts consistent with SMB vulnerabilities. Notably, techniques aligned with MS17-010 (EternalBlue) were employed to target unpatched or legacy systems.
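Spraying leaves a recognizable shape in Security event 4625 (failed logon): one source fails against many different accounts in a short period. The sketch below flags that pattern over already-parsed events. It is not the logic of our scanner. The field names (`time`, `source_ip`, `target_user`) and the default thresholds are hypothetical and would need tuning for each environment.

```python
from collections import Counter, defaultdict
from datetime import datetime, timedelta
from typing import Dict, Iterable, List, Tuple


def find_spray_sources(
    failed_logons: Iterable[dict],
    min_users: int = 10,
    window: timedelta = timedelta(minutes=10),
) -> Dict[str, int]:
    """Return {source_ip: peak distinct accounts} for sources that failed
    against at least `min_users` different accounts inside one sliding window.

    Each event is assumed (hypothetically) to carry 'time' (datetime),
    'source_ip' and 'target_user', e.g. extracted from Security event 4625.
    """
    by_source: Dict[str, List[Tuple[datetime, str]]] = defaultdict(list)
    for ev in failed_logons:
        by_source[ev["source_ip"]].append((ev["time"], ev["target_user"].lower()))

    flagged: Dict[str, int] = {}
    for src, attempts in by_source.items():
        attempts.sort()
        in_window: Counter = Counter()  # attempts per account inside the window
        start = 0
        for t, user in attempts:
            in_window[user] += 1
            # Evict attempts older than `window` relative to the current one.
            while t - attempts[start][0] > window:
                old_user = attempts[start][1]
                in_window[old_user] -= 1
                if in_window[old_user] == 0:
                    del in_window[old_user]
                start += 1
            if len(in_window) >= min_users:
                flagged[src] = max(flagged.get(src, 0), len(in_window))
    return flagged
```

Counting distinct accounts rather than raw failures is what separates spraying from a single user mistyping a password repeatedly.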

This phase resulted in the compromise of a Domain Controller, which became a critical turning point in the intrusion.

Once compromised, the Domain Controller was leveraged to:

- Perform internal reconnaissance at scale.

- Enumerate additional systems and trust relationships.

- Act as a pivot node for internal propagation.

This marked the transition from external access to control of the full internal attack surface.

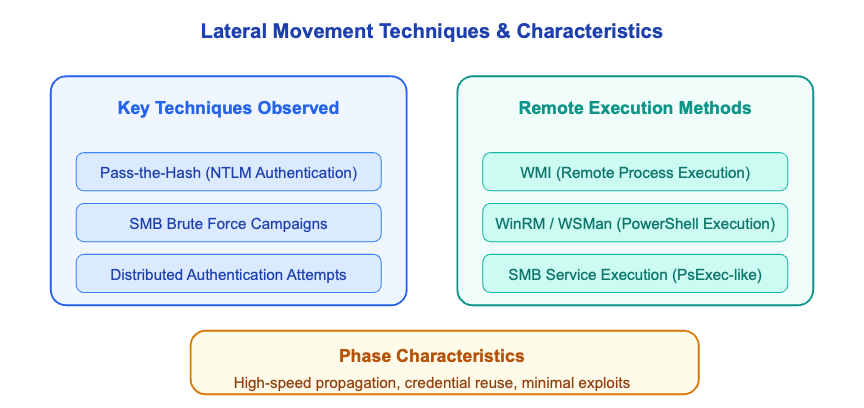

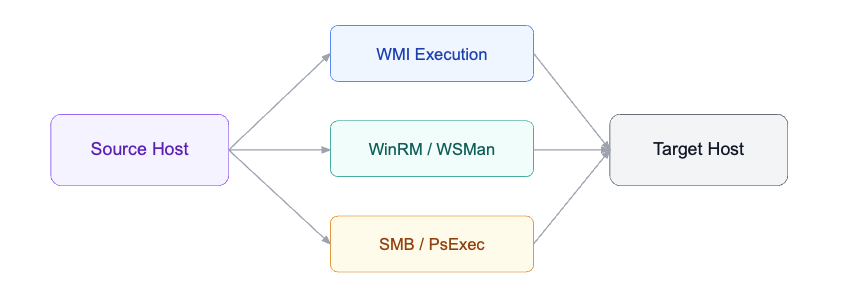

Phase 3 – Credential Abuse & Lateral Movement

With elevated privileges, the attacker moved into a highly automated lateral movement phase focused on credential reuse and remote execution.

Key techniques observed included Pass-the-Hash (PtH) attacks using NTLM authentication, ongoing SMB brute force campaigns targeting administrative access, and distributed authentication attempts across multiple systems. Remote execution was achieved through native Windows mechanisms such as Windows Management Instrumentation (WMI) for remote process execution, WinRM/WSMan for PowerShell-based command execution, and SMB-based service execution resembling PsExec behavior for payload deployment. This phase was characterized by high-speed propagation across the network, extensive reuse of administrative credentials across multiple systems, and minimal reliance on exploits after initial access, reflecting a shift towards “living off the land” tactics.

The attacker effectively transitioned into an automated lateral movement engine, compromising a large portion of the environment in a short time.
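One way to surface this kind of fan-out in the collected logs is to group successful network logons (event 4624, logon type 3) that used NTLM by source address and account, then look for pairs that reached an unusually large number of hosts. This is a minimal sketch over already-parsed events with hypothetical field names. NTLM network logons are also routine in many environments, so the output is a list of leads, not proof of Pass-the-Hash.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def ntlm_fanout(logons: Iterable[dict], min_hosts: int = 5) -> Dict[Tuple[str, str], List[str]]:
    """Map (source IP, account) -> sorted target hosts for NTLM network logons
    that reached at least `min_hosts` distinct hosts.

    Field names are hypothetical: 'event_id', 'logon_type', 'auth_package',
    'source_ip', 'target_user', 'host' (the machine that recorded the event).
    """
    targets: Dict[Tuple[str, str], set] = defaultdict(set)
    for ev in logons:
        if ev.get("event_id") != 4624:          # successful logon
            continue
        if str(ev.get("logon_type")) != "3":    # network logon
            continue
        if str(ev.get("auth_package", "")).upper() != "NTLM":
            continue
        key = (ev.get("source_ip", ""), str(ev.get("target_user", "")).lower())
        targets[key].add(ev["host"])
    return {key: sorted(hosts) for key, hosts in targets.items() if len(hosts) >= min_hosts}
```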

Phase 4 – Ransomware Deployment

The final phase involved coordinated deployment of the Lynx ransomware payload across compromised systems.

Execution characteristics included deployment via remote service creation using PsExec-like techniques, with simultaneous or near-simultaneous execution across multiple hosts. Additionally, ransom notes (README.txt) were distributed widely across systems and network shares to ensure visibility of the extortion demand.

Observed Lynx-specific behaviors:

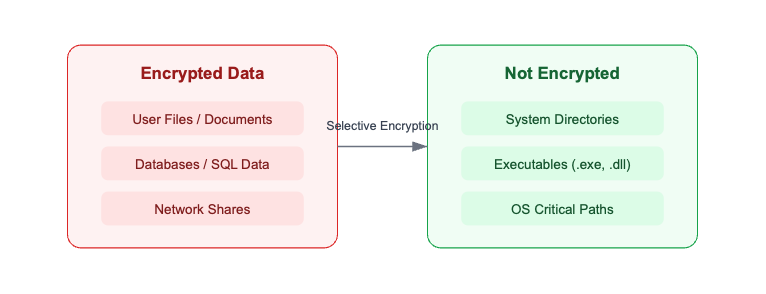

- Selective encryption strategy:

  - Targeted: user data, databases, shared resources

  - Excluded: system directories (e.g., Windows paths)

  - Excluded: executable files (.exe, .dll, etc.)

- Likely process and service termination to unlock files prior to encryption (e.g., database services, backup agents)

- Capability to encrypt network shares, increasing impact across the environment

Systems remained operational at the OS level, but:

- Critical data was encrypted

- Business processes were disrupted

- Operational continuity was degraded rather than completely halted

Lightweight, “Legacy-Safe” Evidence Collection

The environment was operationally fragile: legacy hosts were effectively “held together with duct tape and baling wire,” CPU, RAM, and disk headroom was limited, and any heavy tooling risked pushing systems over the edge. Internal IT had already attempted restarts on some machines, and a few did not come back online. Our collection strategy therefore had to prioritize a minimal footprint.

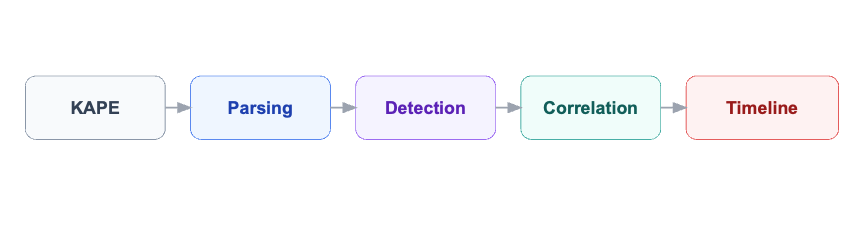

We designed a two-stage collection approach using a tiny bootstrap script for cmd.exe. This script connected to an improvised internal FTP server to download a tuned, minimal KAPE bundle. We avoided the full 300MB+ distribution, instead pushing a lightweight package of just a few megabytes. The output was automatically zipped by hostname and uploaded back to the FTP. Within hours, we had extracted the vital signs of the entire network without a single additional system failure.
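The packaging and upload step can be illustrated in Python. Our actual bootstrap was a cmd.exe script, so this is a sketch of the logic rather than the script we ran. It assumes the collection output sits in its own directory, kept separate from the staging directory so the archive does not include itself; the server name and credentials are placeholders. Plain FTP sends credentials and data unencrypted, which is only tolerable on an isolated internal segment.

```python
import socket
import zipfile
from ftplib import FTP
from pathlib import Path
from typing import Optional


def package_output(collection_dir: str, staging_dir: str, hostname: Optional[str] = None) -> Path:
    """Zip everything under collection_dir into <staging_dir>/<HOSTNAME>.zip."""
    host = hostname or socket.gethostname()
    src = Path(collection_dir)
    archive = Path(staging_dir) / f"{host}.zip"
    with zipfile.ZipFile(archive, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(src.rglob("*")):
            if path.is_file():
                # Store paths relative to the collection root, with forward slashes.
                zf.write(path, arcname=path.relative_to(src).as_posix())
    return archive


def upload(archive: Path, server: str, user: str, password: str) -> None:
    """Push the archive to the collection FTP server (placeholder credentials)."""
    with FTP(server) as ftp:
        ftp.login(user, password)
        with archive.open("rb") as fh:
            ftp.storbinary(f"STOR {archive.name}", fh)
```

Naming each archive after the host means uploads from dozens of machines never collide on the server and can be matched back to their source without opening them.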

How We Built the Tool: Architecture and Design

Once the collection was completed, the analyst disconnected from the internal network to ensure full isolation. However, we quickly encountered a major bottleneck: the sheer volume of forensic data collected from more than 50 hosts. Manual analysis was not feasible within the required response timeframe.

To address this, we developed an Offline Persistence Scanner, a Python-based analysis pipeline designed to process large-scale forensic artifacts efficiently and consistently.

The tool focuses on extracting and correlating key persistence and execution artifacts, including:

- Windows Registry hives

- Scheduled Tasks

- Startup folders and autoruns

- Prefetch files

- Jump Lists

- BAM/DAM artifacts

- Windows Event Logs (EVTX)
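As an example of a single extractor: scheduled tasks under `C:\Windows\System32\Tasks` are stored as XML in the Task Scheduler schema, so the command a task launches can be pulled out with the standard library alone. This is a hedged sketch, not the scanner's code. It reads only `Exec` actions plus the author and run-as account, and the output field names are our own.

```python
import xml.etree.ElementTree as ET
from pathlib import Path
from typing import Dict, List

# Namespace used by Task Scheduler 2.0 task definitions.
NS = {"t": "http://schemas.microsoft.com/windows/2004/02/mit/task"}


def parse_task_xml(raw: bytes, name: str) -> List[Dict[str, str]]:
    """Return one row per Exec action in a task definition.

    Passing bytes (not str) lets the XML parser honour the file's own
    encoding declaration; task files on disk are often UTF-16.
    """
    root = ET.fromstring(raw)
    author = root.findtext("t:RegistrationInfo/t:Author", default="", namespaces=NS)
    run_as = root.findtext("t:Principals/t:Principal/t:UserId", default="", namespaces=NS)
    rows = []
    for action in root.findall("t:Actions/t:Exec", NS):
        rows.append({
            "task": name,
            "author": author,
            "run_as": run_as,
            "command": action.findtext("t:Command", default="", namespaces=NS),
            "arguments": action.findtext("t:Arguments", default="", namespaces=NS),
        })
    return rows


def scan_tasks(tasks_root: str) -> List[Dict[str, str]]:
    """Walk a collected Tasks directory; task names mirror the folder layout."""
    root = Path(tasks_root)
    rows: List[Dict[str, str]] = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            rows.extend(parse_task_xml(path.read_bytes(), path.relative_to(root).as_posix()))
        except ET.ParseError:
            continue  # not a task definition (or damaged); skip rather than abort
    return rows
```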

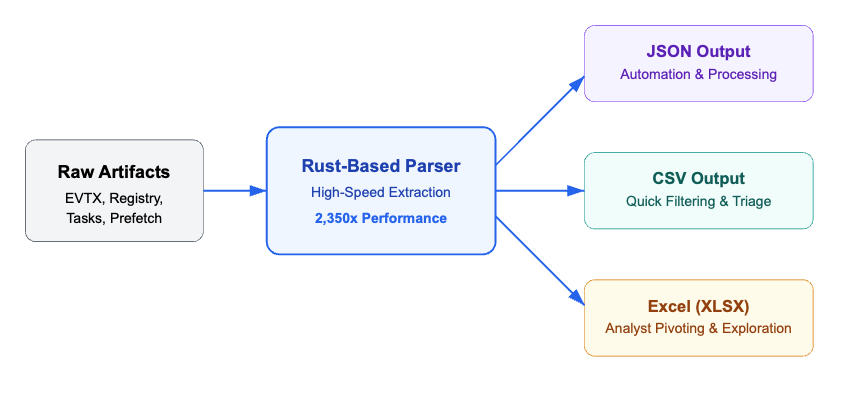

One of the main technical challenges was handling large EVTX files. Traditional Python-based parsers proved too slow for operational use. To overcome this, we integrated a compiled Rust-based EVTX parser (evtx_dump), achieving a speedup of roughly 2,000x or more over standard Python-based approaches. This enabled us to process large Security logs (hundreds of MBs) in seconds instead of hours.
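A minimal wrapper around evtx_dump might look like the following. It assumes the `evtx_dump` binary from the Rust `evtx` project is on the PATH and supports JSON Lines output via `-o jsonl`, and that records follow its default JSON layout (`Event.System`, `Event.EventData`, attributes under `#attributes`). Check both assumptions against the version you deploy. The shape of the returned dictionaries is our own choice.

```python
import json
import subprocess
from typing import Dict, Iterable, Iterator, Optional, Set


def run_evtx_dump(evtx_path: str, binary: str = "evtx_dump") -> str:
    """Run the external parser and return its JSON Lines output (assumed CLI flags)."""
    result = subprocess.run([binary, "-o", "jsonl", evtx_path],
                            capture_output=True, text=True, check=True)
    return result.stdout


def _event_id(system: Dict) -> Optional[int]:
    raw = system.get("EventID")
    if isinstance(raw, dict):  # EventID carrying attributes (e.g. Qualifiers)
        raw = raw.get("#text")
    try:
        return int(raw)
    except (TypeError, ValueError):
        return None


def iter_events(jsonl: Iterable[str], wanted: Optional[Set[int]] = None) -> Iterator[Dict]:
    """Yield flattened records, optionally keeping only the given event IDs."""
    for line in jsonl:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line).get("Event", {})
        system = event.get("System", {})
        eid = _event_id(system)
        if wanted is not None and eid not in wanted:
            continue
        yield {
            "event_id": eid,
            "time": system.get("TimeCreated", {}).get("#attributes", {}).get("SystemTime"),
            "host": system.get("Computer"),
            "data": event.get("EventData") or {},
        }
```

For example, `iter_events(run_evtx_dump("Security.evtx").splitlines(), {4624, 4625})` yields only logon events. Capturing the whole output in memory is the simplest option; for very large logs, streaming the process's stdout would keep memory flat.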

Data Normalization & Output Strategy

Beyond parsing, a key design decision was to normalize all extracted artifacts into structured, analysis-ready formats. The pipeline automatically converts parsed data into JSON for structured processing and automation, CSV for rapid filtering and bulk triage, and Excel (XLSX) for analyst-friendly exploration and data pivoting. This approach ensures that the same dataset can be efficiently consumed across different stages of the investigation, from automated processing to interactive analysis.

This multi-format output significantly sped up the investigation: analysts could rapidly filter authentication events, process executions, and remote activity without the constraints of proprietary tools. Because every host's data shared the same formats, the team could correlate artifacts across hosts, run fast searches, and pivot interactively through the data. This flexibility accelerated the reconstruction of the attack timeline and improved collaboration, since structured findings could be shared immediately across response teams.
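The JSON and CSV side of this normalization can be done with the standard library alone. In this sketch (field names and file layout are illustrative, not the scanner's), heterogeneous records share one CSV header built from the union of their keys, and nested values are serialized as JSON strings so they survive in a flat cell.

```python
import csv
import json
from pathlib import Path
from typing import Any, Dict, List


def _cell(value: Any) -> Any:
    # Nested values (dicts, lists) become JSON text so they fit in one CSV cell.
    if isinstance(value, (dict, list, tuple)):
        return json.dumps(value, default=str)
    return value


def write_outputs(records: List[Dict[str, Any]], out_dir: str, stem: str) -> Dict[str, Path]:
    """Write the same records as <stem>.json and <stem>.csv under out_dir."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)

    # Union of keys in first-seen order, so different artifact types share one header.
    columns: List[str] = []
    for rec in records:
        for key in rec:
            if key not in columns:
                columns.append(key)

    json_path = out / f"{stem}.json"
    json_path.write_text(json.dumps(records, indent=2, default=str), encoding="utf-8")

    csv_path = out / f"{stem}.csv"
    with csv_path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=columns, restval="")
        writer.writeheader()
        for rec in records:
            writer.writerow({k: _cell(v) for k, v in rec.items()})
    return {"json": json_path, "csv": csv_path}
```

For the Excel view, the same records can be loaded into pandas and written with `DataFrame.to_excel`, which requires an engine such as openpyxl to be installed.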

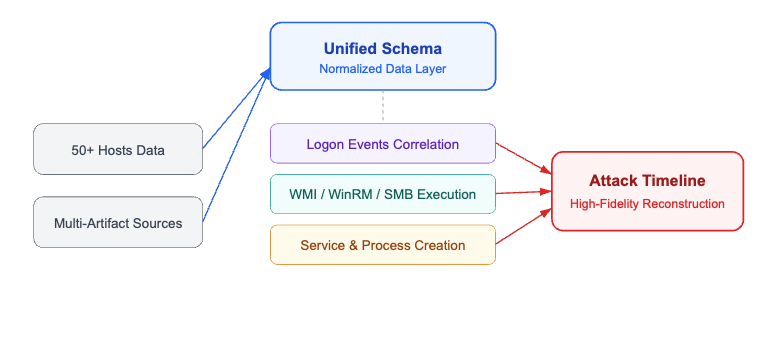

Timeline Reconstruction

By standardizing all artifacts into a unified schema, we were able to merge data from multiple sources and hosts into a single analytical layer. This enabled the correlation of key events such as logon activity, remote execution via WMI, WinRM, and SMB, service creation, and process execution. As a result, we generated a high-fidelity, cross-host timeline of attacker activity, providing a coherent and accurate reconstruction of the intrusion across the entire environment.

This timeline became the backbone of the investigation, enabling a precise reconstruction of the entire attack lifecycle. It allowed us to identify the initial access vector, trace the sequence of lateral movement across the environment, understand the progression of privilege escalation, and ultimately determine the exact point at which ransomware deployment occurred.
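The merge step itself is simple once every record carries a host and a timestamp. This sketch assumes ISO 8601 timestamps (evtx_dump's `SystemTime` values are in that form), converts everything to UTC, and sorts; the record layout is hypothetical. Timestamps without an offset are treated as UTC, an assumption that must hold for your sources.

```python
import re
from datetime import datetime, timezone
from typing import Dict, List

# fromisoformat() before Python 3.11 accepts at most 6 fractional digits.
_EXTRA_FRACTION = re.compile(r"(\.\d{6})\d+")


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp and return it as an aware UTC datetime."""
    v = value.strip()
    if v.endswith("Z"):
        v = v[:-1] + "+00:00"
    v = _EXTRA_FRACTION.sub(r"\1", v)
    dt = datetime.fromisoformat(v)
    if dt.tzinfo is None:
        dt = dt.replace(tzinfo=timezone.utc)  # assumption: naive values are UTC
    return dt.astimezone(timezone.utc)


def build_timeline(per_host: Dict[str, List[dict]]) -> List[dict]:
    """Merge {host: [records with 'time']} into one list sorted by UTC time."""
    merged = []
    for host, records in per_host.items():
        for rec in records:
            row = dict(rec)
            row.setdefault("host", host)
            row["utc"] = parse_ts(rec["time"])
            merged.append(row)
    merged.sort(key=lambda r: (r["utc"], r["host"]))
    return merged
```

Normalizing to UTC before sorting matters in practice: hosts logging in local time would otherwise appear hours out of order relative to the domain controllers.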

Outcome

The combination of high-speed parsing and structured output enabled:

- Rapid triage of large forensic datasets

- Accurate reconstruction of attacker behavior

- Clear visibility into lateral movement patterns

- Reduction of analysis time from hours to minutes

Threat Actor Profile: The Lynx Group

Lynx is a double-extortion ransomware operation (encrypt + threaten to leak) that maintains a public leak site where victim data is posted to increase pressure during negotiations. Public reporting shows a strong presence of victims in the United States, with additional victims in other regions, and recurring impact across manufacturing, business services, technology, and transportation/logistics—a pattern consistent with financially motivated targeting of organizations where downtime creates immediate leverage.

Typical victim profile (what they go after)

Lynx victim listings and analysis suggest a preference for:

- Mid-to-large organizations with centralized AD, file servers, and business-critical applications.

- Operationally sensitive environments (manufacturing, logistics/transport) where disruption impacts revenue quickly.

- Networks with reachable backups and shared storage, enabling the operator to maximize blast radius and extortion value.

Common TTPs observed in public reporting (high-confidence behaviors)

Public technical reporting on Lynx highlights a set of behaviors that align closely with a “classic enterprise ransomware runbook”:

- Ransom note delivery and user-facing intimidation: Lynx is reported to drop a ransom note commonly named README.txt, and may modify visual indicators (e.g., wallpaper) to ensure the incident is immediately visible to users and administrators.

- Selective encryption logic (avoid bricking systems): Lynx has been reported to exclude certain system directories (e.g., Windows, Program Files, AppData, recycle bin) and avoid encrypting common binary extensions such as .exe, .dll, and .msi. In practice, this means data/config/scripts are often impacted, while core executables may remain intact—an important detail when explaining scenarios where operational applications continue running even as data is encrypted.

- Process killing and service stopping to maximize impact: Lynx is reported to terminate processes and stop services associated with databases, email, and backup/recovery tooling (e.g., SQL/Exchange/Veeam-style targets). This increases encryption effectiveness by unlocking files and simultaneously degrades recovery options.

- Network share encryption capability: Reporting indicates Lynx is capable of encrypting network shares, increasing the likelihood of multi-system and multi-site impact in environments with shared storage.

ATT&CK technique mapping (for reporting and internal documentation)

The following ATT&CK techniques are commonly applicable when documenting Lynx-style intrusions and encryption events:

- T1486 – Data Encrypted for Impact (core ransomware outcome)

- T1489 – Service Stop (stopping services that block encryption or enable recovery)

- T1490 – Inhibit System Recovery (actions that reduce recovery options, often paired with service disruption)

- T1059 – Command and Scripting Interpreter (operator automation and execution control)

- T1083 – File and Directory Discovery (identifying what to encrypt)

- T1057 – Process Discovery (identifying processes to terminate prior to encryption)

- T1021.002 – SMB/Windows Admin Shares (common in enterprise lateral movement patterns)

- T1047 – Windows Management Instrumentation (WMI) (remote execution / admin activity)

- T1021.006 – Windows Remote Management (WinRM) (remote execution via WSMan/WinRM)

- T1569.002 – Service Execution (e.g., PsExec-like execution patterns)
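When the timeline is turned into a report, these IDs can be attached mechanically. The sketch below assumes the normalization step already labels each event with an activity type. The labels are hypothetical; the technique IDs are the ones listed above. Which activities map to which techniques remains an analyst decision.

```python
from collections import Counter
from typing import Dict, Iterable, List

# Hypothetical activity labels -> ATT&CK technique IDs from the list above.
ACTIVITY_TO_ATTACK: Dict[str, List[str]] = {
    "smb_admin_share_access": ["T1021.002"],
    "wmi_remote_exec": ["T1047"],
    "winrm_remote_exec": ["T1021.006"],
    "remote_service_exec": ["T1569.002"],
    "service_stopped": ["T1489"],
    "backup_service_stopped": ["T1489", "T1490"],
    "process_enumeration": ["T1057"],
    "file_enumeration": ["T1083"],
    "script_execution": ["T1059"],
    "file_encrypted": ["T1486"],
}


def tag_events(events: Iterable[dict]) -> List[dict]:
    """Return copies of the events with an 'attack' list added (empty if unmapped)."""
    return [dict(ev, attack=list(ACTIVITY_TO_ATTACK.get(ev.get("activity", ""), [])))
            for ev in events]


def technique_counts(events: Iterable[dict]) -> Dict[str, int]:
    """Count how often each technique ID appears across the tagged events."""
    counts: Counter = Counter()
    for ev in tag_events(events):
        counts.update(ev["attack"])
    return dict(counts)
```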

Why Did Operations Keep Running?

One of the most critical questions during this incident was: why did the logistics operation never stop? Warehouses kept moving, fleet management stayed online, and operational processes continued uninterrupted — even as domain controllers and database servers were being encrypted around them.

Our theory, supported by the forensic evidence collected, comes down to how the OT software was architected. The operational systems running the core logistics environment were built on a model common in legacy OT deployments: standalone executables and compiled binaries installed directly under C:\ — not in user directories, not in network shares. Just flat .exe and supporting binary files sitting in fixed paths on local disk.

This matters because Lynx, like most modern ransomware, selects what to encrypt by file extension. Its encryption routines are tuned to go after documents, databases, configuration files, scripts, and data stores (.docx, .xlsx, .mdb, .bak, .sql, .cfg, .ps1, .bat, .vbs, .py, etc.). The scripts are worth emphasizing: any .bat, .ps1, or automation script in the environment was hit. Compiled binaries and executables (.exe, .dll) are typically excluded from encryption to avoid rendering the host completely unbootable, which is a ransomware operator’s worst outcome, since a bricked machine can’t display a ransom note or reach a payment portal.
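To scope which files on an OT host would likely have been hit, the selection logic described above can be approximated as a path check. The exclusion lists in this sketch come only from the public reporting summarized earlier (Windows, Program Files, AppData, recycle bin; .exe, .dll, .msi). They were not extracted from a Lynx sample, and a given build may differ, so treat the result as an estimate.

```python
from pathlib import PureWindowsPath
from typing import Dict, Iterable, List

# Assumed exclusions, based on public reporting only (not on a Lynx sample).
EXCLUDED_DIRS = {"windows", "program files", "appdata", "$recycle.bin"}
EXCLUDED_EXTS = {".exe", ".dll", ".msi"}


def likely_encrypted(path: str) -> bool:
    """True if the path is outside excluded folders and has a non-excluded extension."""
    p = PureWindowsPath(path)
    if {part.lower() for part in p.parts} & EXCLUDED_DIRS:
        return False
    return p.suffix.lower() not in EXCLUDED_EXTS


def split_inventory(paths: Iterable[str]) -> Dict[str, List[str]]:
    """Partition a file inventory into likely-encrypted and likely-spared lists."""
    result: Dict[str, List[str]] = {"likely_encrypted": [], "likely_spared": []}
    for path in paths:
        result["likely_encrypted" if likely_encrypted(path) else "likely_spared"].append(path)
    return result
```

Run against a file listing of an OT host, the "likely_encrypted" side is a rough list of scripts and configuration that need an offline backup before the next incident.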

The result was a clear and observable split in the forensic evidence:

- Survived: .exe and .dll binaries — the OT operational software kept running.

- Encrypted: Scripts (.bat, .ps1, .vbs), configuration files, databases, documents — anything text-based or data-oriented was hit.

This accidental resilience meant the core logistics processes never stopped, but it also meant that any automation, scheduled maintenance scripts, or operational scripting layer built on top of those binaries was encrypted and left unusable. The OT software ran, but it ran blind: no supporting scripts, no automated routines, no configuration management.

This is not a security control. This is luck.

The same architectural pattern that saved operations this time could just as easily have been the attack vector. Unmanaged binaries in flat directory structures, with no integrity monitoring, no allowlisting, and no EDR, are a prime target for binary replacement or DLL hijacking attacks. And next time, a more aggressive ransomware variant may simply choose to encrypt everything — including .exe files — accepting the risk of an unbootable host in exchange for maximum leverage.
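Integrity monitoring does not have to start with a product. A minimal sketch, assuming the OT binaries live under a known root directory: record a SHA-256 baseline of every .exe and .dll, then diff later snapshots against it to spot replaced, added, or removed binaries. This detects changes after the fact; it does not replace allowlisting or EDR.

```python
import hashlib
from pathlib import Path
from typing import Dict, List

BINARY_EXTS = {".exe", ".dll"}


def sha256_file(path: Path, chunk: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large binaries are not read into memory at once."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for block in iter(lambda: fh.read(chunk), b""):
            h.update(block)
    return h.hexdigest()


def baseline(root: str) -> Dict[str, str]:
    """Map relative path -> SHA-256 for every .exe/.dll under root."""
    base = Path(root)
    return {
        p.relative_to(base).as_posix(): sha256_file(p)
        for p in sorted(base.rglob("*"))
        if p.is_file() and p.suffix.lower() in BINARY_EXTS
    }


def compare(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Report binaries added, removed, or changed between two baselines."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }
```

Storing the baseline off-host matters: a copy kept next to the binaries can be altered by the same attacker who replaces them.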

Takeaways

- One weak seasonal password was the entry point. MFA on VPN is non-negotiable.

- Legacy OT environments without EDR are forensically blind. KAPE + offline analysis was the only option.

- The Pass-the-Hash campaign shows how fast credential abuse scales. NTLM should be restricted.

- Building the right tool for the environment matters. The Offline Persistence Scanner saved days of manual work.

- If your OT environment survived a ransomware event because the attacker “didn’t bother” with your executables, that’s not resilience — that’s a near miss.